- Executive Director’s Note

- Center Augments Core Surveys With Special-Focus Items

- Stories From the Field: Southcentral Kentucky Community and Technical College (KY)

- Student Voices: On Guided Pathways

- A Conversation With William Law, Center National Advisory Board Chair

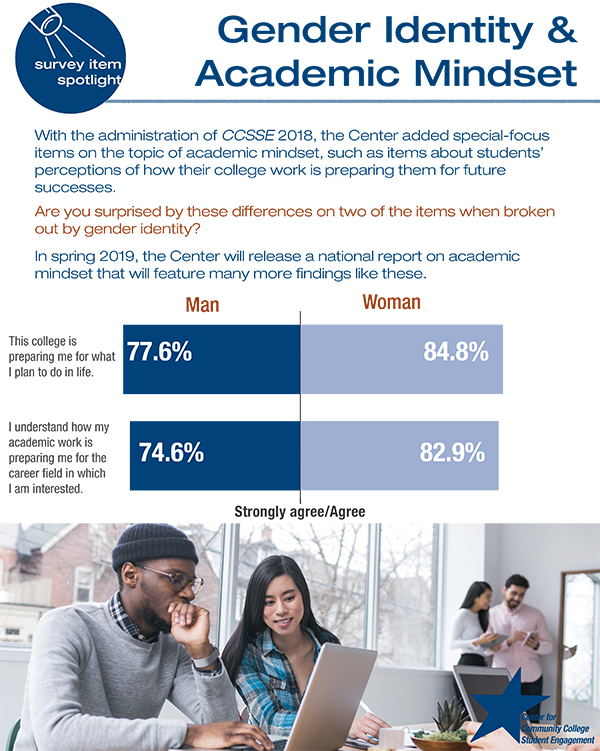

- Survey Item Spotlight: Gender Identity & Academic Mindset

- Featured Tool: The Data Narrative Exercise

- Center on the Road

Executive Director’s Note

September 2018

Across the country, colleges are redesigning for guided pathways, and as the new academic year begins, the future is bright. Here at the Center, we are excited because this fall, with the Survey of Entering Student Engagement (SENSE), and next spring, with the Community College Survey of Student Engagement (CCSSE), we will be collecting data on how students are experiencing guided pathways. Because many colleges started the redesign work with academic advising, these new data will support and amplify the recent data colleges collected on advising.

As colleges redesign the student experience, it’s key to hear from students to determine if they are actually having the experience that was designed for them. These new data will help colleges know where the design is working and where improvement is needed. Let’s stay tuned to hear what students are saying!

You can take a peek at the guided pathways items and others we are currently developing in the next article on upcoming Center-developed special-focus items.

Center Augments Core Surveys With Special-Focus Items

To keep the Center’s surveys fresh and relevant for the community college field, the Center selects a “special-focus” topic each year and develops new item sets that enable colleges to explore more deeply certain issues that are key to improved student engagement and student success. These item sets are free for participating colleges to administer. With the 2018 Community College Survey of Student Engagement (CCSSE) administration, the Center added items on the topic of Academic Mindset to augment the core instrument. Over 80,000 students across 160 colleges responded to these items. This item set asks students questions about things such as whether they believe they can learn all of the material being presented in their courses and whether they understand how their academic work is preparing them for the career field in which they are interested. Center research staff is now analyzing these responses, and the results will be featured in a spring 2019 report.

In the Survey of Entering Student Engagement (SENSE) that colleges are administering across the country this fall, the special-focus items are on the topic of Guided Pathways. The special-focus items that will be added to CCSSE 2019 will also be on the topic of Guided Pathways. This item set asks students questions such as whether a staff member at the college has talked with them about the types of jobs their pathway of study might lead to and whether a staff member at the college has talked with them about the overall process for transferring to a four-year institution.

Center staff is currently at work creating a special-focus item set on The Working Learner. These items will be added to the SENSE 2019 and CCSSE 2020 administrations, and they will explore connections between students’ work for pay and its effect on their progress in meeting their academic goals.

Stories From the Field: Southcentral Kentucky Community and Technical College (KY)

Engagement: Good for Students, Good for Industry

Work ethic ... being on time, teamwork, use of personal technology, and completing a full day’s work. Have you had a conversation with industry about today’s work ethic decline? We think every college has.

In 2010, Southcentral Kentucky Community and Technical College (SKYCTC) was using CCCSE data to enhance student engagement when industry began expressing concerns about declining work ethic. The College’s dilemma became, “How can academia simultaneously improve student academic outcomes and students’ work ethic and related soft skills, both of which emanate from values deeply engrained in students’ worldviews?” Ironically, CCCSE research has shown that the same issues industry is concerned with are also integral to student engagement—things like showing up for class, teamwork, and completing coursework. This was a transformational epiphany: Classroom engagement practices mirroring behavioral expectations found in productive companies could improve both student outcomes and work ethic for industry. With the phrase “Students don’t do optional” resonating with many faculty, SKYCTC’s Workplace Ethics Agreement (WPA) was created.

SKYCTC’s WPA reinforces values of quality teaching and learning by elevating classroom standards and expanding the definition of student success beyond the traditional academic grade scale. When students come to class on time and act professionally, teaching and learning are more effective. When students are present, are prepared, and participate in class, their success increases. Students quickly learn that classrooms are preparing them to become better employees, as well as successful students.

Behavioral expectations within SKYCTC’s WPA were placed on all on-campus syllabi, and faculty explained the importance of certain industry expectations, particularly punctuality, participation, hard work, and professionalism. Sick leave and vacation policies are generally very well-defined by employers, and violation of these policies could result in dismissal. Similar expectations were incorporated into the classroom.

Now in its eighth academic year, SKYCTC’s WPA has had a positive impact on student success, enrollment, retention, graduation, and public perception of the college. Though SKYCTC has experienced yearly increases in enrollment and federal aid awarded, there has been a decrease of nearly 40% in Return-to-Title IV funds. The graduation rates of Pell-eligible students (lower income) and minority transfer students have surpassed the graduation rates of other student populations.

In addition to student success, industry leaders report that SKYCTC students are better prepared for the workplace and score newly hired graduates higher on both work quality and work attitude. In the community, economic development officials showcase SKYCTC’s WPA as evidence of how SKYCTC is responding to workforce needs.

Dr. Maggie Shelton

Provost

Dr. Phil Neal

President/CEO

Student Voices: On Guided Pathways

In spring 2018, Center staff facilitated focus groups with students at several colleges implementing Guided Pathways. The Center is currently collecting survey data and plans to release a national report on guided pathways in spring 2020. Many additional resources can be found on the Guided Pathways Resource Center.

Listen in to hear what students are saying about their experiences with the four components of the pathways journey.

- Component One: Clarify the Path

- Component Two: Get on the Path

- Component Three: Stay on the Path

- Component Four: Ensure Students Are Learning

A Conversation With William Law, Center National Advisory Board Chair

William Law, former president of St. Petersburg College (FL) and new Center National Advisory Board Chair, recently shared the following in a conversation with Center Executive Director Evelyn Waiwaiole.

Will you tell us about your background?

I started my community college career as Vice President for Institutional Planning at St. Petersburg Junior College (FL) in January of 1981. In that role, I had the good fortune to be part of the impact of the microcomputer revolution on the classroom experience and the student learning process. I watched the evolution of classroom teaching, textbooks, out-of-class learning, and faculty professional development as the technology became faster, easier to use, and more ubiquitous in college learning.

In late spring of 1988, I was selected as president of Lincoln Land Community College in Springfield, Illinois, where I replaced the founding president who had served for more than 20 years. By this time, accreditation agencies had begun to move beyond “input” metrics to the range of performance metrics, data, and information that had not been previously collected, organized, and displayed. At the same time, the focus of accreditation had shifted to include an institutional self-assessment plan by which the college had to document the means it employed to collect, share, and develop plans to address the impacts of its activities, rather than the comparative rank of its inputs, that is, the amount of resources put into each program.

After four years in Illinois, I was selected to be the founding president of Montgomery College (now Lone Star College-Montgomery) in suburban Houston. I had the freedom to design the facilities at the new college and to organize the academic and student support programs. The single most impactful learning trend at the time was the creation of learning centers on each campus where students could go when not in class to access more powerful and focused electronic learning, while at the same time being given immediate access to learning support and tutors to assist them in their study efforts.

At Montgomery College, we had our first experience with the use of CCSSE—the Community College Survey of Student Engagement. Indeed, Montgomery College served as a pilot site to field test the survey instrument and to refine its administration process. In 2002, I returned to Tallahassee (I had gotten my graduate degrees from Florida State University) to become the president of Tallahassee Community College (TCC). At TCC, not a great deal of work had been done in the movement toward assessing student learning for all students. The college had a great deal of data on student demographics, but very little data beyond anecdotes of the effectiveness of teaching and the impact on students. (I might add that the anecdotal information was impressive—TCC students went on to the university system and did quite well!) By now, the higher education industry was in full disruption trying to balance the impacts of evolved accreditation, greater diversity, higher public accountability, and stunning changes in the use of technology both in and out of classes. The challenges to convey and sort out all of the interactions of these observable trends caused a great deal of anxiety for the classroom instructors and all who were responsible for supporting students on a day-to-day basis.

My final professional step came in June of 2010 when I was selected as the president of St. Petersburg College, among the very first community colleges in America to add baccalaureate education to a long history of associate and certificate programs. Assessing the progress of students as they moved through the institution became a paramount challenge for the college. Trying to identify documentable patterns of success/failure, managing the ever-tightening financial aid support, and—most importantly—maintaining and expanding the faculty’s control in the classroom setting proved high order challenges to academic leaders and to college trustees.

How did the institutions you led use Center data?

My graduate school background was in the field of Institutional Research, so I had a natural affinity for clear, concise, and reliable data. Before committing to pilot and use the CCSSE survey, I reviewed each of the questions contained in the form to be certain that the responses would be useful, understandable, and supportable from other research. I knew that we would have in-depth discussions about why an item was included in the survey, how the responses would be portrayed, and what differences in student learning responses would imply, both individually and collectively. The CCSSE survey questions were meticulously researched from other student performance studies and were clearly able to withstand any contentions of bias, reliability, or accuracy. This seemed vital if we were to have faculty support the use of the survey and if we were going to use the results to advise budget decisions and college policy.

At three institutions I led, the process was quite similar. Faculty leadership were engaged with assessing how best to assemble factual, reliable data on student progress through the institution. We asked faculty to examine the scope and nature of the CCSSE survey and to determine if the results could give strong guidance to strengthening areas where students seemed to be having the most difficult times. Prior to the administration of the survey, I would write a letter to each of the faculty who were being asked to give up a class period to allow for the administration of the survey. I would emphasize to them that the CCSSE survey was the only commitment I would seek that took away from their classroom time; the need to better understand how our students made progress through the institution was paramount. To their collective credit, no faculty ever complained or declined to participate!

At all levels, and at all times, we had a complete commitment to transparency in sharing the results of the survey with all members of the college. In fairness, these three institutions (Montgomery College, Tallahassee Community College, and St. Petersburg College) were strong performers in student achievement, a fact that mitigated the sense that the CCSSE survey would be used for manipulative or heavy-handed purposes.

Annual survey results would be distributed as soon as they were received, and leadership discussions—both formal and informal—would ensue on what we perceived the data was “telling us.” The results would be summarized and presented at a monthly meeting of the college’s board with early recommendations of how the results could be included in the next round of institutional planning and budgets. Addressing the findings of the CCSSE survey became the primary (non-legislative) driver of budget decisions.

What benefits do you see for colleges utilizing Center data for institutional improvement?

The commitment of an institution to use CCSSE survey data as a key element in institutional planning and budgeting provides an institution with “more lift, less drag.” The results are unassailable—proven research-based questions, tightly structured administration, and hundreds of other similar institutions in the findings. Individual faculty and counselors are not identified or singled out as particularly strong or particularly weak. The nature of the survey questions provides impetus to early strategies that can be adopted by faculty—individually and collectively—to address and strengthen the experience of students as they enter, proceed through, and exit the institution.

CCSSE also provides a means for a college to compare its results with those of similar colleges of its own choosing. Participating colleges are not “rank ordered” to determine who is the best (or the worst!). Colleges can prioritize their internal energies to address areas of need or to change the experiences of students as evidenced in the survey results. Programs and services do not feel singled out, but rather feel supported in the ongoing challenges to improve the student experience.

The most common response to the survey results is one of “I thought that was the case.” Most of the CCSSE findings are not a surprise to the institutional participants. The presentation of the findings does, however, impel strong discussion about how to address the evidenced needs. Rarely do the results fall to only one department or program. One of the biggest benefits of using CCSSE is that the student support professionals find common ground with the instructional faculty in their mutual efforts to guide students to successful completion of their programs.

Featured Tool: The Data Narrative Exercise

Colleges that participate in SENSE and CCSSE have access to large data sets representing the voices of their students. After receiving survey results, the challenge is to use those data in a meaningful way, one that promotes positive change. The Center has found that a data narrative approach, a data sharing method built on in-depth discussions around just a few simply stated data points, encourages deeper and more thoughtful data-informed conversations across a variety of audiences.

The data narrative approach uses data to tell a story—a story that develops and takes shape as data are shared and discussed. The premise is to present groups with a series of simply stated, related data points, with each data point being shared one at a time. After each data point is revealed, groups spend time discussing what the data point means to them in terms of their own work at the college. As group members talk about the meaning behind the data, a storyline develops, making the data more relevant and more relatable. Each data point is meant to build on the other, at times challenging members to take an honest look at their own roles in the college student experience.

The data narrative approach can be used either to introduce Center data to various campus stakeholders or to augment existing conversations focused on student success. To facilitate the latter, the Data Narrative Exercise, available on the CCSSE and SENSE tools pages, includes an index that maps specific survey items to various topics of potential interest. To empower participants, colleges may consider providing copies of frequency reports for the whole survey, which can refine the narrative being developed and promote further meaningful data-informed conversation across campus.

Center on the Road

Center staff have facilitated many workshops, webinars, and conference sessions throughout 2018. Featured topics have been academic advising, guided pathways, developmental education, student enrollment patterns, and the front-door experience.

University of Hawaii Community Colleges-Hawaii Student Success Institute

March 26-28, 2018

Evelyn Waiwaiole, Executive Director, Center for Community College Student Engagement

Tonjua Williams, President, St. Petersburg College (FL)

Myndi Swanson, College Liaison, Center for Community College Student Engagement

Hartnell College (CA) Workshop

April 13, 2018

Willard Lewallen, Superintendent, Hartnell College (CA)

Linda García, Assistant Director of College Relations, Center for Community College Student Engagement

Michael Poindexter, Vice President of Student Services, Sacramento City College (CA)

In the fall, Center staff will present at several national, regional, and statewide conferences, including:

Community College of Vermont State Workshop

October 5

SAIR (Southern Association for Institutional Research)

October 6-9

NCCCS Conference (North Carolina Community College System)

October 7-9

AIRUM Conference (Association for Institutional Research in the Upper Midwest)

November 1-2

MCCA 54th Annual Convention & Tradeshow (Missouri Community College Association)

November 7-9

ASHE (Association for the Study of Higher Education)

November 14-17

If you are interested in Center staff facilitating a workshop or professional development activity at your college, please contact info@cccse.org.